The Trainer currently uses GPT-J-6B, arguably the best ChatGPT alternative model available on Hugging Face. Here is the training note from the original source:

This model was trained for 402 billion tokens over 383,500 steps on TPU v3-256 pod. It was trained as an autoregressive language model, using cross-entropy loss to maximize the likelihood of predicting the next token correctly.

https://huggingface.co/EleutherAI/gpt-j-6b#training-procedure
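To make the training objective quoted above more concrete, here is a minimal sketch of next-token cross-entropy in plain Python. The function name, the toy vocabulary of three tokens, the logits, and the token ids are all made-up values for illustration and do not come from GPT-J; a real training run would compute the same quantity with a framework such as PyTorch.

```python
import math

def next_token_cross_entropy(logits, token_ids):
    """Average negative log-likelihood of each next token.

    logits[t] holds the unnormalised scores for the token at position
    t + 1, given tokens 0..t (hypothetical toy values in this sketch).
    """
    # Position t predicts token t + 1, so the targets are the inputs shifted by one.
    targets = token_ids[1:]
    total = 0.0
    for scores, target in zip(logits, targets):
        # Numerically stable log-sum-exp: subtract the max before exponentiating.
        peak = max(scores)
        log_norm = peak + math.log(sum(math.exp(s - peak) for s in scores))
        # Negative log-probability of the correct next token.
        total += log_norm - scores[target]
    return total / len(targets)

# Toy example: vocabulary of 3 tokens, sequence of 3 token ids.
token_ids = [0, 2, 1]
logits = [
    [0.1, 0.2, 3.0],  # scores after seeing token 0; the true next token is 2
    [0.5, 2.5, 0.1],  # scores after seeing tokens 0 and 2; the true next token is 1
]
loss = next_token_cross_entropy(logits, token_ids)
print(f"loss = {loss:.4f}")
```

Minimising this loss is the same as maximising the likelihood of the correct next token: a model that assigns equal scores to all three tokens would score log(3), and the loss shrinks as the correct token receives more probability.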

Dataset Links: https://d8devs.com/chameleon-base-and-chameleon-shop-datasets-20230530-1918/

import os

import pandas as pd
import torch
from sklearn.model_selection import train_test_split
from transformers import TrainingArguments, Trainer, AutoModelForCausalLM, AutoTokenizer

checkpoint = "EleutherAI/gpt-j-6b"  # Model checkpoint updated
device = "cuda" if torch.cuda.is_available() else "cpu"

tokenizer = AutoTokenizer.from_pretrained(checkpoint, trust_remote_code=True)
# Reuse the end-of-sequence token for padding if no pad token is defined
if tokenizer.pad_token is None:
    tokenizer.pad_token = tokenizer.eos_token

# Load the model once and move it to the selected device
model = AutoModelForCausalLM.from_pretrained(checkpoint, trust_remote_code=True)
model.to(device)

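# Load your data
data = pd.read_csv(os.path.join(os.getcwd(), 'chameleon_base_dataset20230530-1942.csv'))

# Prepare your data
# You need to decide how to use your CSV data to create training examples for the model.
# For example, you might concatenate the 'description', 'code', and 'explanation' fields into a single string.
texts = (data['description'] + ' ' + data['code'] + ' ' + data['explanation']).dropna().tolist()

# Split data into training and validation sets before tokenizing
train_texts, val_texts = train_test_split(texts, test_size=0.2)

# Tokenize your data and wrap it so the Trainer can index individual examples.
# For causal language modelling the labels are the input ids themselves,
# so the 'classname' column is not used as a label here.
class TextDataset(torch.utils.data.Dataset):
    def __init__(self, texts):
        self.enc = tokenizer(texts, padding=True, truncation=True, max_length=512, return_tensors='pt')

    def __len__(self):
        return self.enc['input_ids'].size(0)

    def __getitem__(self, idx):
        item = {key: tensor[idx] for key, tensor in self.enc.items()}
        # Copy the input ids as labels and mask padding so the loss ignores it
        item['labels'] = item['input_ids'].clone()
        item['labels'][item['attention_mask'] == 0] = -100
        return item

train_inputs = TextDataset(train_texts)
val_inputs = TextDataset(val_texts)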
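The tokenizer call above pads and truncates every example to a common length. The sketch below mimics that step on plain Python lists to show roughly what the resulting `input_ids`, `attention_mask`, and causal-LM `labels` look like. The helper name `pad_and_mask`, the toy token ids, and the pad id of 0 are assumptions made for illustration; the value -100 is the index that PyTorch's cross-entropy loss ignores by default, which is why it is commonly used to mask padding in the labels.

```python
def pad_and_mask(sequences, max_length, pad_id=0):
    """Truncate each sequence to max_length, then right-pad to the longest one."""
    truncated = [seq[:max_length] for seq in sequences]
    width = max(len(seq) for seq in truncated)
    batch = {"input_ids": [], "attention_mask": [], "labels": []}
    for seq in truncated:
        padding = width - len(seq)
        batch["input_ids"].append(seq + [pad_id] * padding)
        # 1 marks a real token, 0 marks padding.
        batch["attention_mask"].append([1] * len(seq) + [0] * padding)
        # Labels repeat the input ids; padded positions are excluded from the loss.
        batch["labels"].append(seq + [-100] * padding)
    return batch

# Hypothetical token ids: the first example is longer than max_length.
batch = pad_and_mask([[5, 6, 7, 8, 9], [3, 4]], max_length=4)
for name, rows in batch.items():
    print(name, rows)
```

Every row in the batch ends up with the same length, so the rows can be stacked into a single tensor, while the mask and the -100 labels keep the padding from influencing attention or the loss.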

# Define training arguments

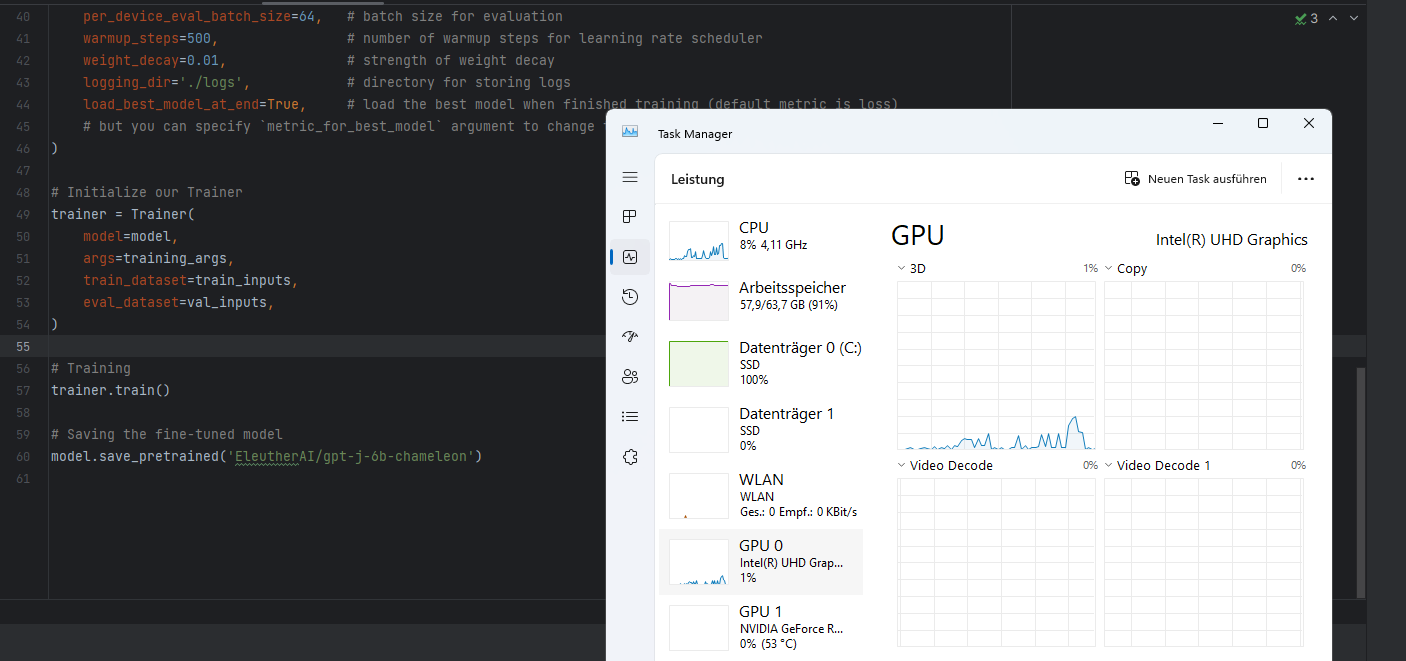

training_args = TrainingArguments(
    output_dir='./results',          # output directory
    num_train_epochs=3,              # total number of training epochs
    per_device_train_batch_size=16,  # batch size per device during training
    per_device_eval_batch_size=64,   # batch size for evaluation
    warmup_steps=500,                # number of warmup steps for learning rate scheduler
    weight_decay=0.01,               # strength of weight decay
    logging_dir='./logs',            # directory for storing logs
    evaluation_strategy='epoch',     # evaluate once per epoch (named `eval_strategy` in newer transformers releases)
    save_strategy='epoch',           # must match the evaluation strategy when load_best_model_at_end is set
    load_best_model_at_end=True,     # load the best model when finished training (default metric is loss)
    # but you can specify `metric_for_best_model` argument to change to accuracy, f1, etc.
)
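The `warmup_steps=500` setting only makes sense relative to the total number of optimisation steps, which depends on dataset size, batch size, and epochs. The sketch below estimates that total and evaluates a linear warmup followed by a linear decay, which to my knowledge is the Trainer's default schedule type. The dataset size of 10,000 examples is a made-up number, the peak learning rate of 5e-5 is the `TrainingArguments` default, and both helper names are hypothetical. It assumes a single device and no gradient accumulation.

```python
import math

def total_training_steps(num_examples, batch_size, epochs):
    """Optimisation steps for a run, counting a final partial batch per epoch."""
    steps_per_epoch = math.ceil(num_examples / batch_size)
    return steps_per_epoch * epochs

def linear_warmup_decay(step, warmup_steps, total_steps, peak_lr=5e-5):
    """Learning rate at a given step: linear ramp up, then linear decay to zero."""
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)

# Hypothetical dataset of 10,000 examples with the batch size and epochs used above.
total = total_training_steps(num_examples=10_000, batch_size=16, epochs=3)
print("total steps:", total)
for step in (0, 250, 500, total):
    print(step, linear_warmup_decay(step, warmup_steps=500, total_steps=total))
```

With a much smaller dataset, for example 1,000 examples, the same settings give only 189 steps, so a 500-step warmup would end the run before the learning rate ever reaches its peak. In that case a smaller `warmup_steps` value is worth considering.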

# Initialize our Trainer
trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=train_inputs,
    eval_dataset=val_inputs,
)

# Training
trainer.train()

# Saving the fine-tuned model together with its tokenizer
model.save_pretrained('EleutherAI/gpt-j-6b-chameleon')
tokenizer.save_pretrained('EleutherAI/gpt-j-6b-chameleon')
